What is Serverless? | Serverless Vs Monolith | AWS Lambda

Exploring the Benefits of Serverless Computing and AWS Lambda

Introduction

Building APIs for backend services has traditionally involved setting up and managing servers on cloud platforms. However, serverless computing has emerged as an alternative that is often more efficient and cost-effective. In this article, we will delve into serverless architecture, compare it with monolithic setups, and examine AWS Lambda, a serverless service provided by Amazon Web Services (AWS).

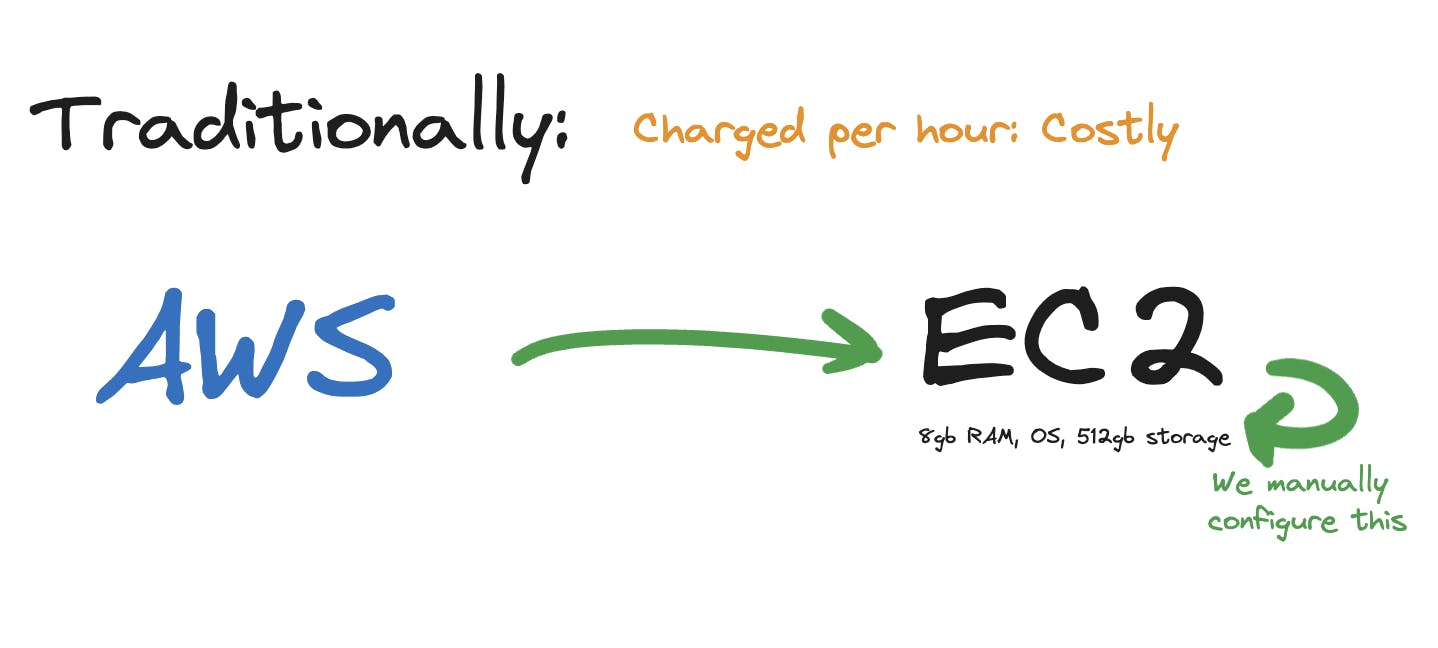

Understanding the Traditional Approach

In the traditional monolithic approach, we would provision a server on a cloud platform, such as an AWS EC2 instance. We would configure the server with specific resources, such as RAM and storage, install the required operating system, and manage all the necessary libraries and dependencies ourselves. Scaling up or down would also be our responsibility. With this approach, we are charged for as long as the server is running, whether or not it is handling requests, which can become costly during periods of low traffic.

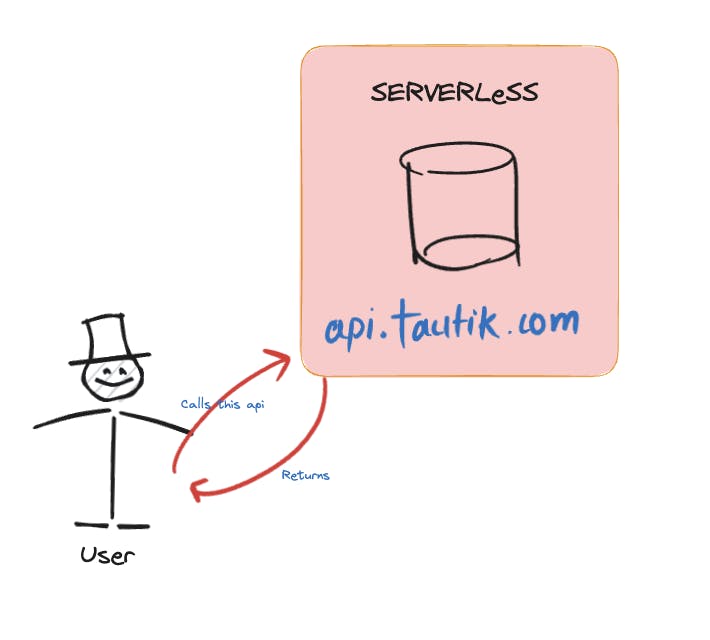

Introducing Serverless Architecture

Serverless architecture, on the other hand, simplifies the process by abstracting away the underlying infrastructure. With AWS Lambda, we can focus solely on writing the code for our API functions, while AWS takes care of managing the resources, such as provisioning the necessary compute power, allocating RAM, and scaling the infrastructure automatically. In a serverless setup, we are billed for the number of invocations and the compute time each one consumes, so we only pay for what we use. This is often more cost-effective when traffic is low or uneven.

Exploring AWS Lambda

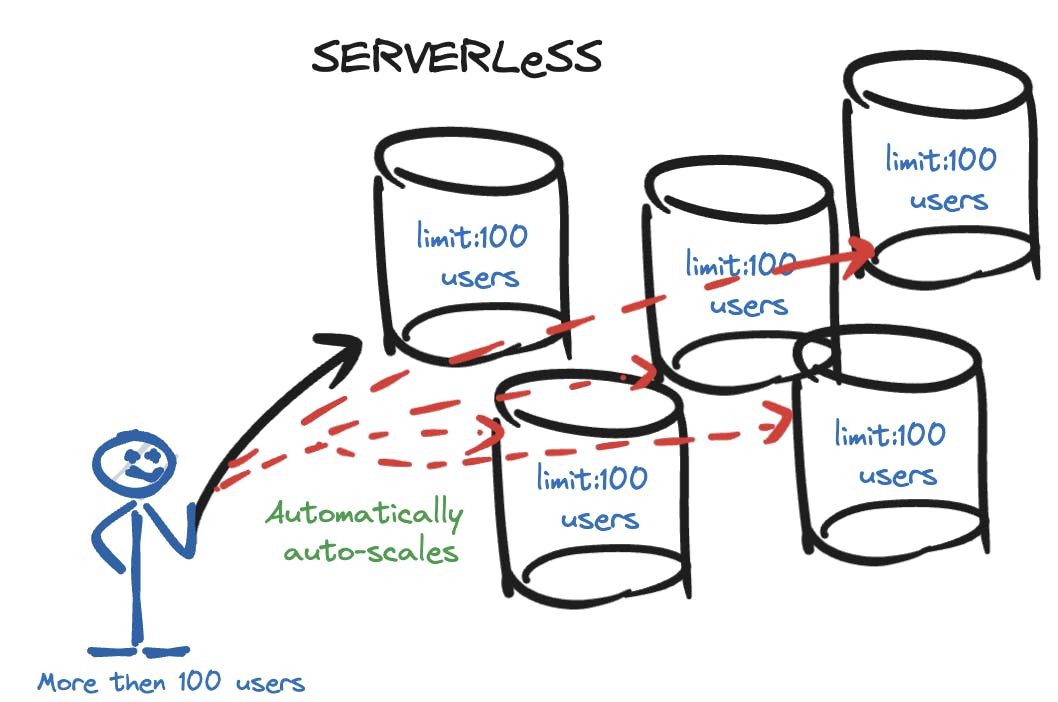

AWS Lambda is a popular serverless service provided by Amazon. It allows us to write functions in various supported programming languages, such as JavaScript, Python, or Java. These functions can be triggered by events, such as API calls or data changes, and AWS Lambda takes care of executing the code in parallel as needed. This auto-scaling capability ensures that our API can handle varying levels of traffic without manual intervention.
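To make this concrete, here is a minimal sketch of what a Python function for Lambda might look like. It assumes the function sits behind an HTTP trigger that passes query parameters in `queryStringParameters` and expects a `statusCode`/`body` response (the shape used by API Gateway's proxy integration). The `name` parameter and the greeting are purely illustrative.

```python
import json


def lambda_handler(event, context):
    """Entry point that Lambda calls once per invocation.

    `event` carries the trigger payload; `context` carries runtime metadata.
    """
    # queryStringParameters may be missing or None when no query string is sent.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")  # hypothetical parameter for this example

    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }
```

Because the handler is just a function that takes a dictionary, it can be called locally with a hand-built event before it is ever deployed.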

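A Lambda function can be exposed through an HTTP endpoint, for example via Amazon API Gateway or a Lambda function URL, which users call like any other API. Because we are charged per invocation rather than for idle server time, this is comparatively cheap for workloads with light or intermittent traffic.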
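The cost argument can be checked with some quick arithmetic. The sketch below assumes Lambda-style billing with two components, a per-request charge and a charge per GB-second of compute, and compares it with an always-on server billed by the hour. Every price is passed in as a parameter and the figures in the usage example are illustrative assumptions, not current AWS pricing; free-tier allowances are ignored.

```python
def lambda_monthly_cost(invocations, avg_duration_ms, memory_mb,
                        price_per_million_requests, price_per_gb_second):
    """Estimate a month's cost for a pay-per-use function."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    # Compute is metered as memory (in GB) multiplied by run time (in seconds).
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return request_cost + gb_seconds * price_per_gb_second


def server_monthly_cost(hourly_rate, hours_per_month=730):
    """Estimate a month's cost for a server that runs around the clock."""
    return hourly_rate * hours_per_month


if __name__ == "__main__":
    # Illustrative inputs only; check the provider's pricing page for real values.
    serverless = lambda_monthly_cost(
        invocations=1_000_000, avg_duration_ms=100, memory_mb=512,
        price_per_million_requests=0.20, price_per_gb_second=0.0000166667,
    )
    server = server_monthly_cost(hourly_rate=0.05)
    print(f"serverless: ${serverless:.2f}  always-on server: ${server:.2f}")
```

The gap narrows as traffic grows: at sustained high volume the per-invocation charges add up, and a fixed-price server can become the cheaper option.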

Challenges of Serverless Architecture

While serverless architecture offers numerous benefits, there are a few challenges to be aware of.

Cold Start Delays

Imagine we have not called a particular API for a long time and then try to access it again.

This is the issue of cold start: there can be a noticeable delay in executing a function that hasn't been invoked recently, because a fresh execution environment has to be set up first. This delay can impact the response time of our API. It's important to optimize our functions and consider techniques like keeping functions warm or leveraging provisioned concurrency to mitigate cold start delays.
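One way to see cold starts in practice is to track them from inside the function. The sketch below relies on the fact that module-level code runs once when an execution environment is created, while the handler runs on every invocation. The names `CONFIG`, `_is_cold`, and `cold_aware_handler` are hypothetical, and the dictionary stands in for whatever expensive setup a real function performs.

```python
import time

# Module-level code runs once per execution environment, i.e. on a cold start.
_init_started = time.perf_counter()
CONFIG = {"greeting": "hello"}  # stand-in for expensive setup (clients, models, config)
INIT_SECONDS = time.perf_counter() - _init_started

_is_cold = True  # flipped to False after the first invocation in this environment


def cold_aware_handler(event, context):
    """Report whether this invocation paid the cold start penalty."""
    global _is_cold
    was_cold = _is_cold
    _is_cold = False
    return {
        "cold_start": was_cold,
        "init_seconds": INIT_SECONDS,
        "greeting": CONFIG["greeting"],
    }
```

Keeping expensive setup at module level means warm invocations skip it entirely, and logging the `cold_start` flag shows how often users actually hit the slow path.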

Stateless Design

Serverless functions are stateless by design: anything held in memory may be gone by the next invocation, so managing state becomes a challenge. External services like Redis are commonly used to overcome this limitation.

"Serverless functions excel at short-lived, stateless operations, but for maintaining state, external storage options like Redis or databases should be considered."

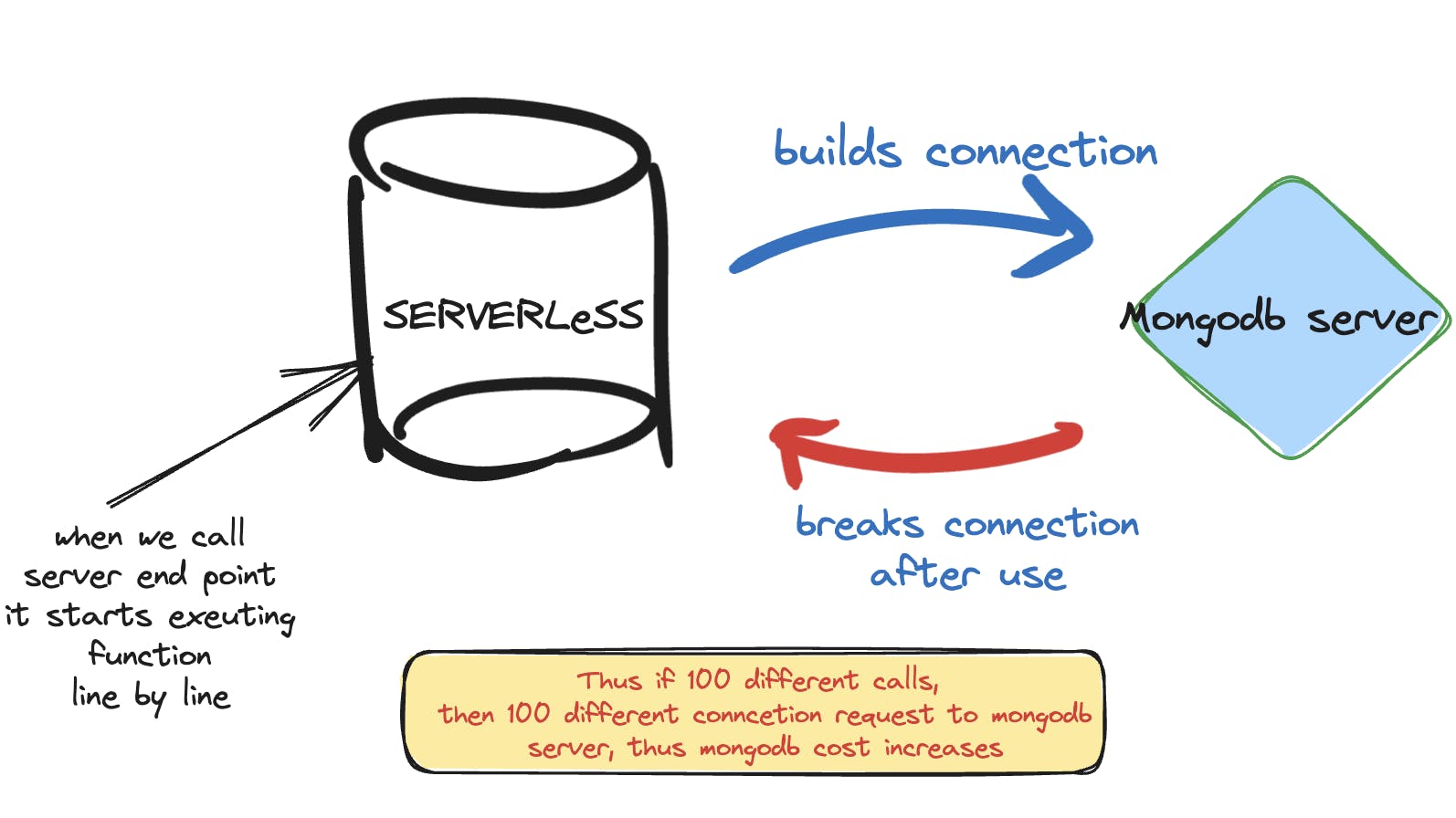
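The idea of pushing state out of the function can be sketched as follows. The handler keeps nothing in memory between calls; it only talks to a store that is handed to it. `InMemoryStore` is a local stand-in so the example runs without any infrastructure, and it is not suitable for real use on Lambda. In a real deployment the store would be an external service; a Redis client exposing an `incr` method would fit the same `CounterStore` interface, though that wiring is an assumption and is not shown here.

```python
from typing import Dict, Protocol


class CounterStore(Protocol):
    """Anything that can atomically increment a named counter."""

    def incr(self, key: str) -> int: ...


class InMemoryStore:
    """Local stand-in for an external store, for demonstration only."""

    def __init__(self) -> None:
        self._data: Dict[str, int] = {}

    def incr(self, key: str) -> int:
        self._data[key] = self._data.get(key, 0) + 1
        return self._data[key]


def make_handler(store: CounterStore):
    """Build a handler whose only state lives in `store`."""

    def count_visit_handler(event, context):
        user = event.get("user_id", "anonymous")  # hypothetical event field
        visits = store.incr(f"visits:{user}")
        return {"user_id": user, "visits": visits}

    return count_visit_handler
```

Two handlers built around the same store see the same counts, which mirrors how separate execution environments stay consistent when they share an external store.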

Concurrent Connections

There is a limit on how many invocations can run concurrently, and each concurrent invocation may open its own connections to downstream services such as databases, which can be a concern when dealing with high-traffic scenarios. Implementing a proxy layer or load balancing strategies can help manage these connections effectively.

Proper architecture planning and scaling strategies are necessary to handle concurrent connections in serverless environments.
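A small, commonly used step toward keeping connection counts down is to open a connection once per execution environment and reuse it across invocations, instead of opening a new one on every call. The sketch below illustrates this with `sqlite3` as a stand-in for a real database client; `get_connection`, `query_handler`, and the `connections_opened` counter are hypothetical names for this example. It does not cap the total number of connections across environments, which is the job of a proxy or pooling layer.

```python
import sqlite3
from typing import Optional

# Cached at module level so warm invocations in this environment reuse it.
_connection: Optional[sqlite3.Connection] = None
connections_opened = 0  # counter kept only to make the reuse visible


def get_connection() -> sqlite3.Connection:
    """Open the connection lazily on first use, then return the cached one."""
    global _connection, connections_opened
    if _connection is None:
        _connection = sqlite3.connect(":memory:")  # stand-in for a real database
        connections_opened += 1
    return _connection


def query_handler(event, context):
    conn = get_connection()
    value = conn.execute("SELECT 1 + 1").fetchone()[0]
    return {"result": value, "connections_opened": connections_opened}
```

With this pattern the number of open connections tracks the number of live execution environments rather than the number of requests.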

Conclusion

Serverless architecture, exemplified by AWS Lambda, has revolutionized the way we build backend services. By abstracting away infrastructure management, it allows us to focus on writing code and delivering value. It offers auto-scaling, cost optimization, and reduced operational overhead. However, it also introduces certain challenges, such as cold start delays and managing state.

Understanding the trade-offs and choosing the right architecture for our specific use cases is key to leveraging the benefits of serverless computing.

By embracing serverless architecture and leveraging services like AWS Lambda, we can build scalable and cost-efficient APIs without the need for manual server management.

With proper planning and understanding of serverless best practices, we can create robust and reliable backend systems.

Thanks a lot for reading the article.

Hope you found it helpful.

LinkedIn: https://www.linkedin.com/in/tautikk/

Email: tautikagrahari@gmail.com

Twitter: https://twitter.com/TautikA